AI Influencer 2026

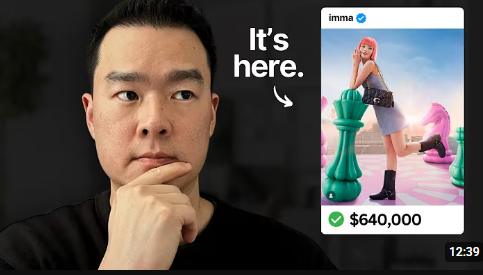

AI Influencer

OpenClaw Is Broken — Why That’s a Sign Autonomous AI Agents Are the Future

Autonomous AI agents are the next evolution of artificial intelligence. And tools like OpenClaw are showing us exactly where that future is headed — including the messy parts.

If you’ve been following AI automation trends, you’ve probably noticed a shift. We’re moving beyond chatbots that answer questions. The new wave of AI doesn’t just respond — it takes action.

That’s where OpenClaw comes in.

And no, it’s not really “broken.”

It’s early.

What Is OpenClaw?

OpenClaw is an autonomous AI agent framework designed to execute tasks, interact with software, and automate workflows without constant human input.

Instead of simply generating text like traditional conversational AI, autonomous AI agents like OpenClaw can:

- Run multi-step workflows

- Interact with files and applications

- Trigger actions across systems

- Chain tasks together automatically

- Operate with partial independence

This represents a major shift in AI development.

We are entering the era of agentic AI systems — software that acts like a digital operator rather than a digital assistant.

What Are Autonomous AI Agents?

To understand why this matters, let’s define the term clearly.

Autonomous AI agents are AI systems that can:

- Interpret a goal

- Break it into steps

- Execute those steps using tools or APIs

- Adjust based on outcomes

Unlike chat-based AI tools, which stop after giving you an answer, AI task execution software continues working until the objective is complete.

That’s the difference between:

- AI that gives advice

- AI that performs the work

This is why AI workflow automation is drawing so much attention within artificial intelligence.
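The goal, steps, execute, adjust cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not how OpenClaw works internally: the `Agent` class, its hard-coded `plan` method, and the `research` and `report` tools are all hypothetical stand-ins, and "adjusting based on outcomes" is reduced here to retrying a failed step.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool type: plain functions standing in for real tools or APIs.
Tool = Callable[[str], str]


@dataclass
class Agent:
    tools: dict[str, Tool]
    max_attempts: int = 3
    log: list[tuple[str, str]] = field(default_factory=list)

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Stand-in planner: a real agent would ask a model to break the goal down.
        return [("research", goal), ("report", goal)]

    def run(self, goal: str) -> list[str]:
        outputs: list[str] = []
        for tool_name, arg in self.plan(goal):
            for _ in range(self.max_attempts):
                try:
                    outputs.append(self.tools[tool_name](arg))
                    self.log.append((tool_name, "ok"))
                    break  # step succeeded, move on to the next one
                except Exception as exc:
                    # Adjust based on the outcome: record the failure and retry.
                    self.log.append((tool_name, f"failed: {exc}"))
            else:
                raise RuntimeError(f"step {tool_name!r} did not succeed")
        return outputs


def make_flaky_research() -> Tool:
    """Build a demo tool that fails on its first call, then succeeds."""
    calls = {"n": 0}

    def research(topic: str) -> str:
        calls["n"] += 1
        if calls["n"] == 1:
            raise TimeoutError("source unavailable")
        return f"notes on {topic}"

    return research


agent = Agent(tools={
    "research": make_flaky_research(),
    "report": lambda topic: f"report: {topic}",
})
outputs = agent.run("agent security")
```

The chat-style equivalent would stop after producing the plan. Here the loop keeps going until every step has either succeeded or exhausted its attempts, and `agent.log` keeps a trace of what happened along the way.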

Why OpenClaw Feels “Broken”

The phrase “OpenClaw is broken” doesn’t mean it doesn’t function.

It highlights the tension between power and safety in autonomous systems.

When an AI agent can:

- Send emails

- Modify files

- Execute commands

- Access software

- Automate decision chains

…it introduces new risks.

1. AI Security Risks

Granting high-level access to an AI agent creates serious security concerns. Without proper guardrails, permission systems, and sandboxing, an autonomous agent can take the wrong action, such as emailing the wrong person or overwriting the wrong file.

Text errors are annoying.

Action errors are expensive.
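One common form of guardrail is a deny-by-default allowlist checked before any action runs. The sketch below is a hypothetical illustration of that idea, not OpenClaw’s permission system: `Policy`, `PermissionDenied`, and `guarded_call` are names invented for this example, and it covers only the permission check, not sandboxing.

```python
from dataclasses import dataclass
from typing import Callable


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its allowlist."""


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset[str]

    def check(self, action: str) -> None:
        # Deny by default: anything not explicitly listed is refused.
        if action not in self.allowed_actions:
            raise PermissionDenied(f"action {action!r} is not permitted")


def guarded_call(policy: Policy, action: str,
                 handler: Callable[..., str], *args: str) -> str:
    policy.check(action)  # the check runs before any side effect
    return handler(*args)


# A read-only agent: it may read files but nothing else.
read_only = Policy(allowed_actions=frozenset({"read_file"}))
content = guarded_call(read_only, "read_file",
                       lambda path: f"contents of {path}", "notes.txt")

try:
    guarded_call(read_only, "send_email",
                 lambda to: f"sent to {to}", "team@example.com")
    blocked = False
except PermissionDenied:
    blocked = True  # the email handler never ran
```

Because the check happens before the handler is called, a mistaken step fails loudly instead of turning into an action error.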

2. AI Deployment Challenges

Enterprise AI agents require:

- Audit logs

- Role-based permissions

- Compliance tracking

- Execution transparency

Many early AI automation platforms focus on capability before governance.

That creates friction when moving from demo to production.
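To make the governance requirements above more concrete, here is a minimal sketch that combines role-based permissions with an audit log. The role names, the `ROLE_PERMISSIONS` table, and the `AuditLog` class are assumptions made for illustration. A production system would write to durable, tamper-resistant storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

# Hypothetical role table: each role maps to the actions it may perform.
ROLE_PERMISSIONS = {
    "viewer": {"read_record"},
    "operator": {"read_record", "update_record"},
}


class AuditLog:
    """Append-only list of JSON entries, one per attempted action."""

    def __init__(self) -> None:
        self.entries: list[str] = []

    def record(self, agent_id: str, role: str, action: str, allowed: bool) -> None:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "role": role,
            "action": action,
            "allowed": allowed,
        }
        self.entries.append(json.dumps(entry))


def authorize(log: AuditLog, agent_id: str, role: str, action: str) -> bool:
    # Unknown roles get an empty permission set, so they are always denied.
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    # Log every attempt, including denied ones, for later review.
    log.record(agent_id, role, action, allowed)
    return allowed


audit = AuditLog()
viewer_ok = authorize(audit, "agent-1", "viewer", "update_record")
operator_ok = authorize(audit, "agent-2", "operator", "update_record")
first_entry = json.loads(audit.entries[0])
```

Recording denied attempts as well as allowed ones is a deliberate choice here: the denials are often what a compliance review most needs to see.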

The Shift From Chatbots to AI Action Agents

The evolution of AI can be broken into three stages:

Stage 1: Information AI

Search engines and static Q&A systems.

Stage 2: Conversational AI

ChatGPT-style systems generating content and code.

Stage 3: Agentic AI Systems

AI that executes tasks and automates workflows.

OpenClaw sits squarely in Stage 3.

And this stage changes how businesses think about AI infrastructure.

Why Autonomous AI Agents Matter for Businesses

Autonomous workflow software can reduce operational overhead by taking over repetitive, multi-step digital tasks.

Here’s what AI business automation can enable:

- Automated research and reporting

- Content production pipelines

- CRM updates and sales outreach

- DevOps task execution

- Data extraction and system syncing

Instead of hiring for repetitive digital labor, companies can deploy AI orchestration systems.

The value is no longer in getting better answers.

It’s in automating execution.

The Real Future of AI Automation

The future of AI automation isn’t fully autonomous systems running unchecked.

It’s structured autonomy.

The winning model looks like this:

Human sets objective →

AI agent executes steps →

System logs actions →

Human reviews and adjusts →

Workflow improves over time

This is known as human-in-the-loop AI, and it’s likely to define enterprise adoption.

Autonomous AI agents will operate within guardrails, not without them.
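A rough sketch of one human-in-the-loop variant follows, in which review happens before each step runs rather than afterwards. Everything here is hypothetical: `ProposedAction`, `run_with_review`, and the `cautious_reviewer` rule are illustrative names, and in practice the approval step would be a person using a review interface, not a function.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    description: str
    run: Callable[[], str]


def run_with_review(
    actions: list[ProposedAction],
    approve: Callable[[ProposedAction], bool],
) -> list[tuple[str, str, Optional[str]]]:
    """Execute only approved actions and log the decision for each one."""
    log: list[tuple[str, str, Optional[str]]] = []
    for action in actions:
        if approve(action):
            log.append((action.description, "executed", action.run()))
        else:
            # Rejected actions are logged but never executed.
            log.append((action.description, "rejected", None))
    return log


def cautious_reviewer(action: ProposedAction) -> bool:
    # Stand-in for a human decision: refuse anything destructive.
    return "delete" not in action.description


proposed = [
    ProposedAction("draft weekly report", lambda: "report drafted"),
    ProposedAction("delete old CRM records", lambda: "records deleted"),
]
review_log = run_with_review(proposed, cautious_reviewer)
```

The resulting log gives the reviewer a record of what ran and what was held back, which is the input to the "human reviews and adjusts" step in the flow above.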

OpenClaw as a Signal, Not a Failure

Every major technological shift goes through instability.

Early cloud systems were unreliable.

Early mobile apps were insecure.

Early web platforms were chaotic.

Autonomous AI agents are at that same stage.

OpenClaw exposes architectural and security gaps by pushing capability forward.

And that exposure is necessary.

It forces better:

- AI governance models

- Security frameworks

- Agent permission systems

- Execution controls

“Broken” is often just a sign that the system is ahead of the safeguards.

The Bottom Line

OpenClaw is not broken in the sense of failing to work.

It’s pushing the boundaries of what autonomous AI agents can do and, in the process, exposing the challenges of AI task execution software.

The future of AI is not just smarter chat.

It’s AI infrastructure.

It’s AI workflow automation.

It’s AI action agents operating inside structured systems.

Autonomous AI agents are the next platform layer in artificial intelligence.

And like every new platform shift, the early versions look unstable — until they become standard.
